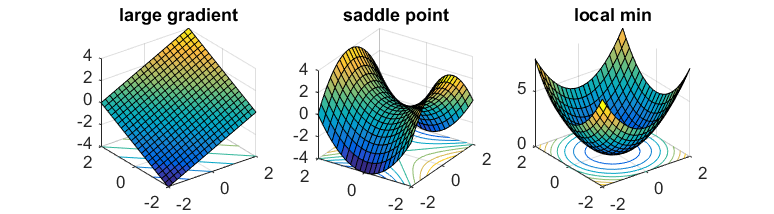

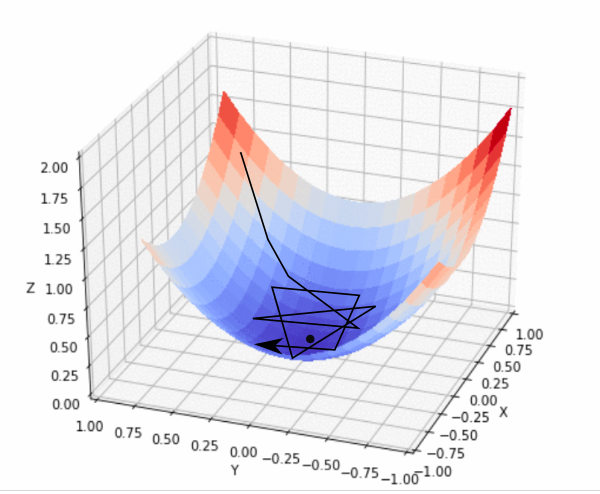

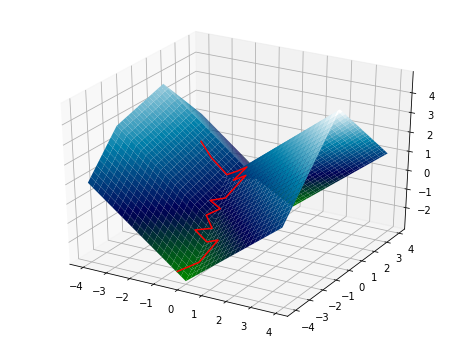

Animations of Gradient Descent and Loss Landscapes of Neural Networks in Python, by Tobias Roeschl (Towards Data Science)

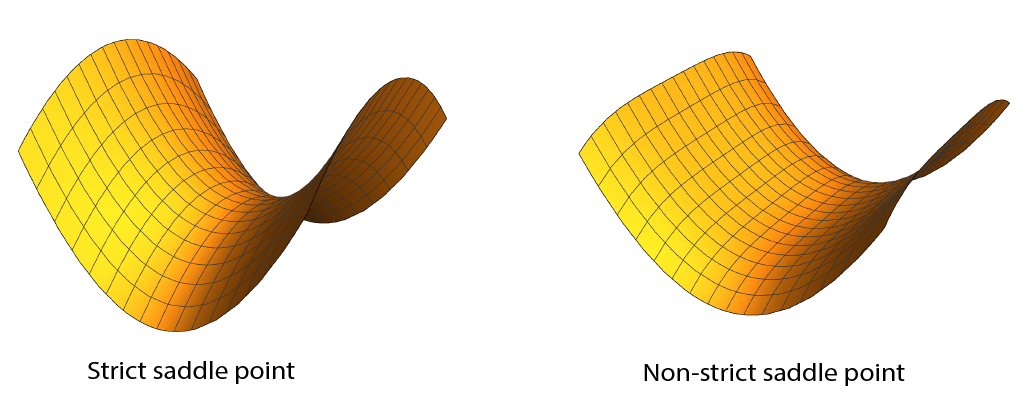

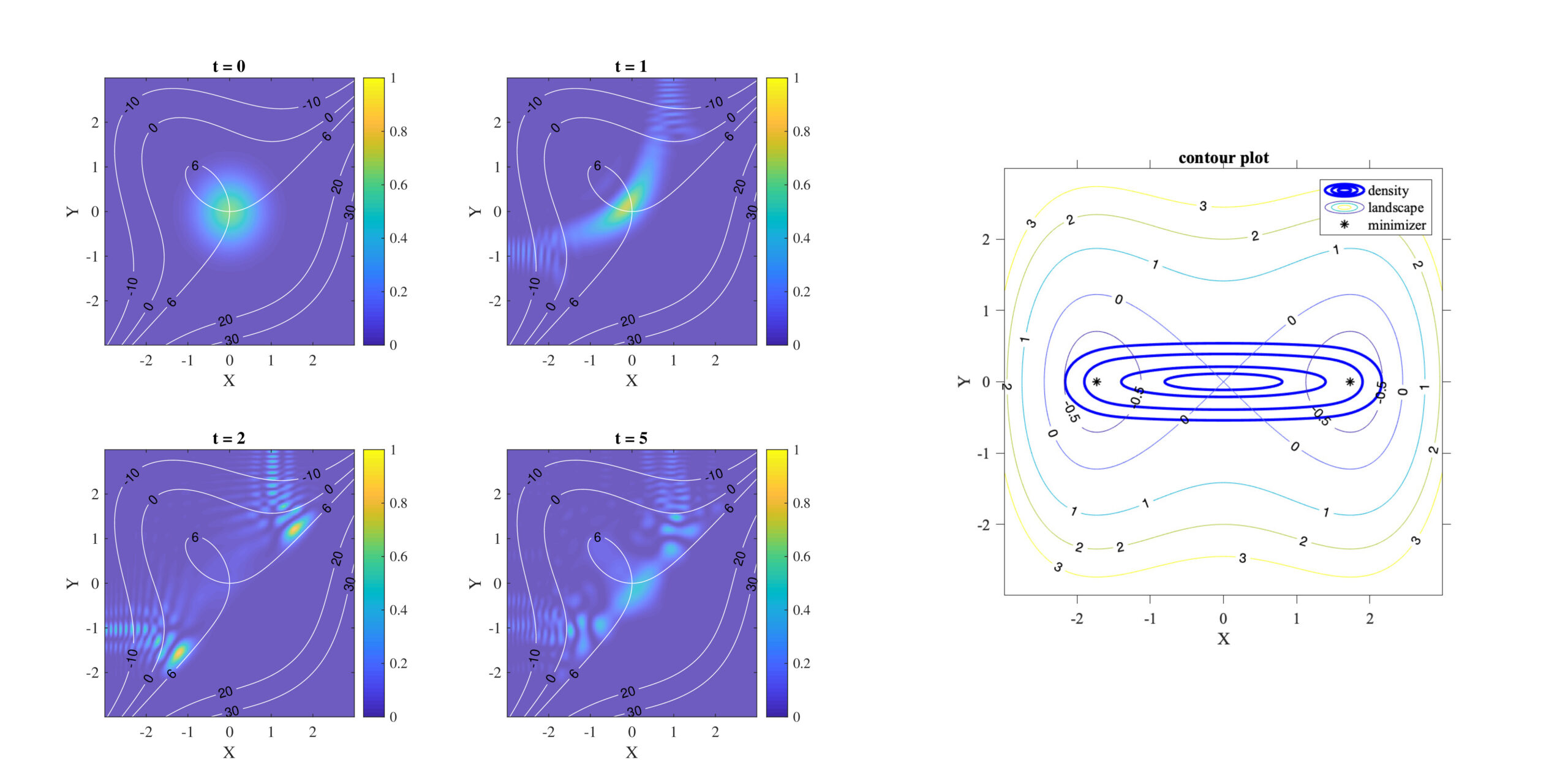

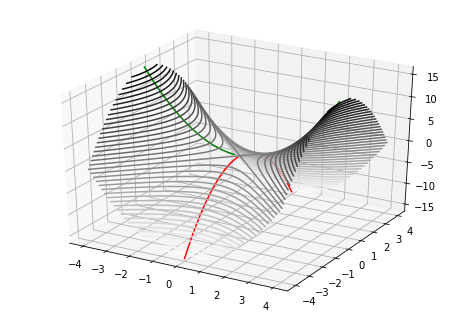

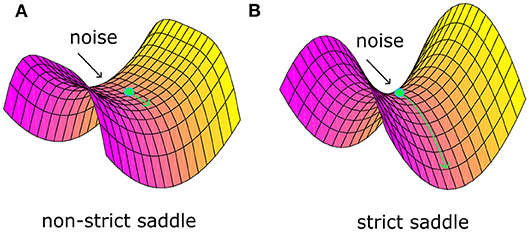

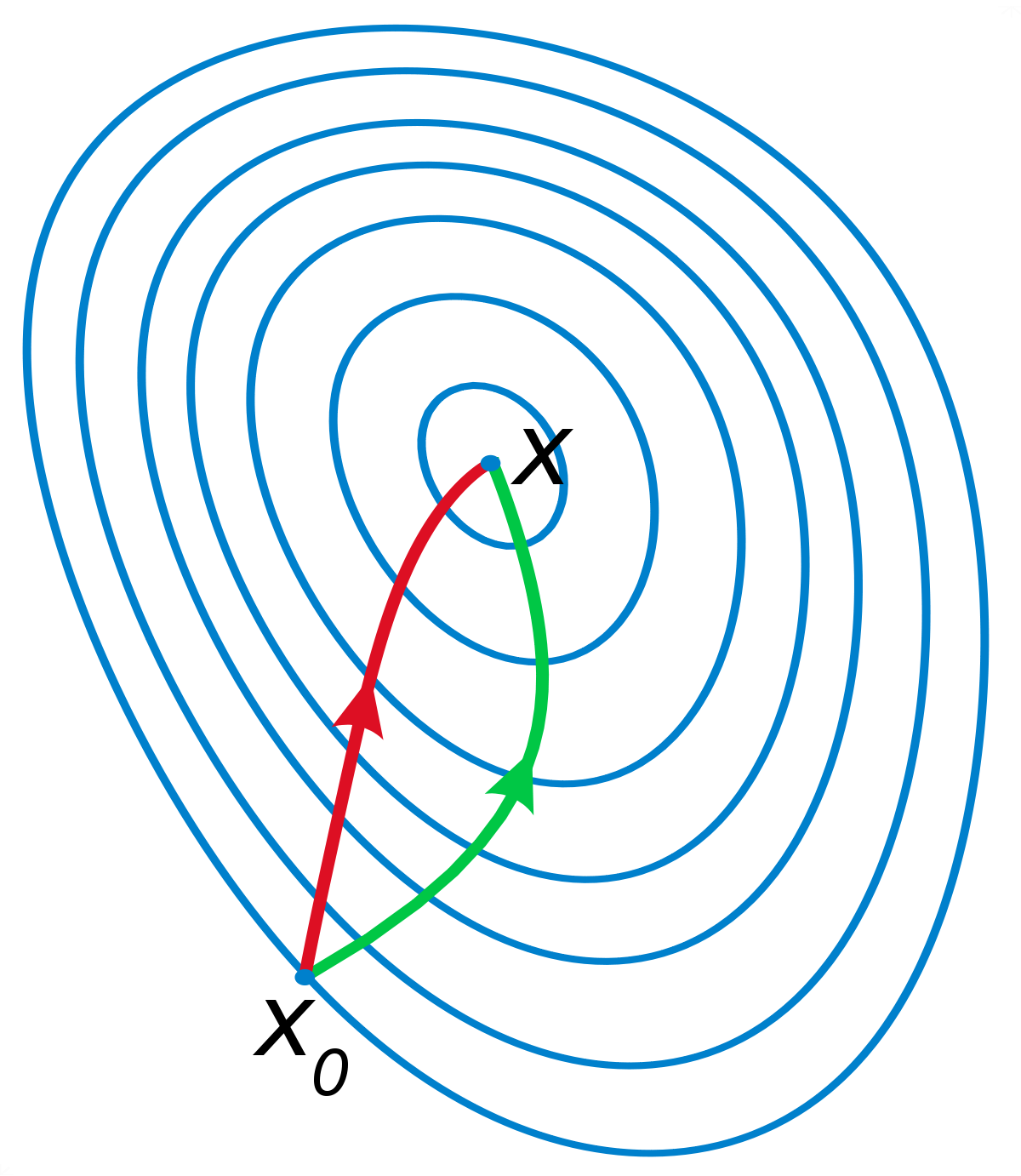

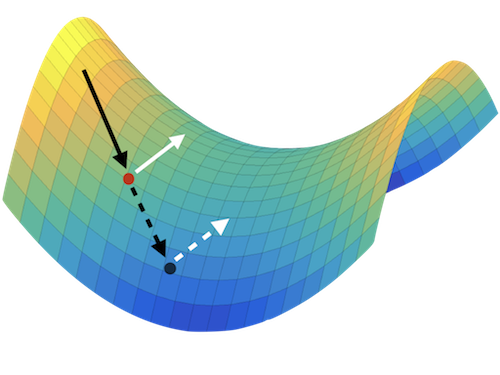

Gabriel Peyré on X: "Gradient descent is inefficient to find saddle point (Nash equilibrium) for min-max games, because of spiralling behaviour. Beware when training your GANs … https://t.co/KKKGQ4q9JA https://t.co/xjgLuUHPMU https://t.co/hYYH0xWUXP"
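A minimal sketch of the spiralling behaviour described in the tweet follows. It assumes the simplest bilinear min-max game, f(x, y) = x·y, which is our illustrative choice and not taken from the tweet. Here x minimizes and y maximizes, and the only saddle point (Nash equilibrium) is (0, 0). With simultaneous gradient descent on x and ascent on y at step size η, one update maps (x, y) to (x − ηy, y + ηx). The squared distance to the equilibrium therefore becomes (1 + η²)(x² + y²), so every step rotates the iterate by atan(η) and pushes it outward. The function names `grad_f` and `simultaneous_gda` are hypothetical helpers.

```python
import math


def grad_f(x, y):
    """Partial derivatives of the assumed bilinear game f(x, y) = x * y."""
    # df/dx = y, df/dy = x
    return y, x


def simultaneous_gda(x0, y0, lr, steps):
    """Simultaneous gradient descent (on x) / ascent (on y).

    x tries to minimize f and y tries to maximize f; the unique
    saddle point / Nash equilibrium of f(x, y) = x * y is (0, 0).
    Returns the list of iterates, including the starting point.
    """
    x, y = x0, y0
    path = [(x, y)]
    for _ in range(steps):
        gx, gy = grad_f(x, y)
        # Both players update from the same (old) iterate.
        x, y = x - lr * gx, y + lr * gy
        path.append((x, y))
    return path


if __name__ == "__main__":
    lr = 0.1
    path = simultaneous_gda(1.0, 0.0, lr, steps=100)
    for t in (0, 25, 50, 75, 100):
        x, y = path[t]
        r = math.hypot(x, y)  # distance to the equilibrium (0, 0)
        theta = math.degrees(math.atan2(y, x))  # angle of the iterate
        print(f"step {t:3d}: distance = {r:.4f}, angle = {theta:7.2f} deg")
```

The printed distances increase at every step while the angle keeps turning. The iterates circle the equilibrium and slowly move away from it instead of converging, which is the spiralling failure mode the tweet warns about for GAN training.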

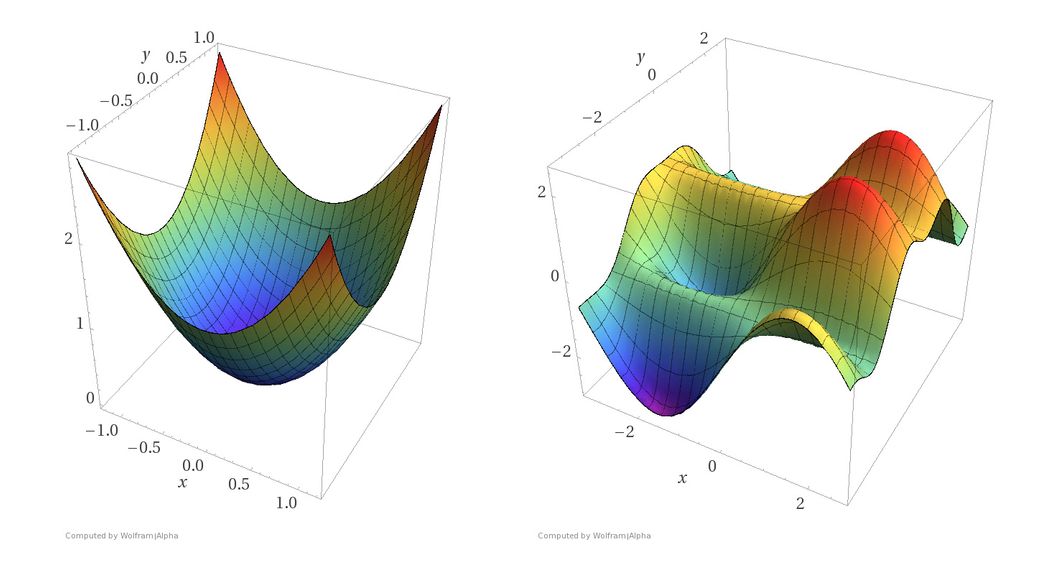

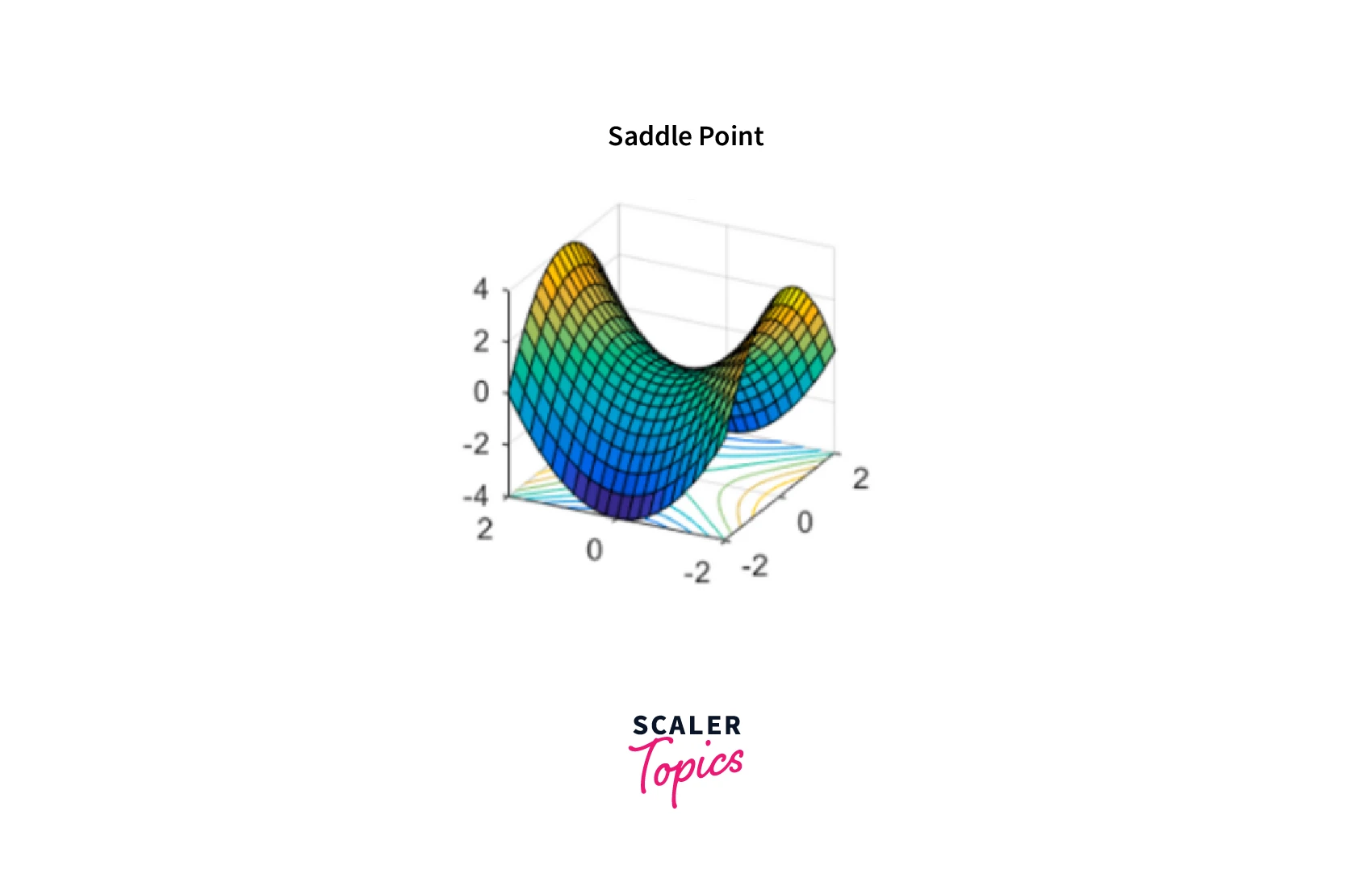

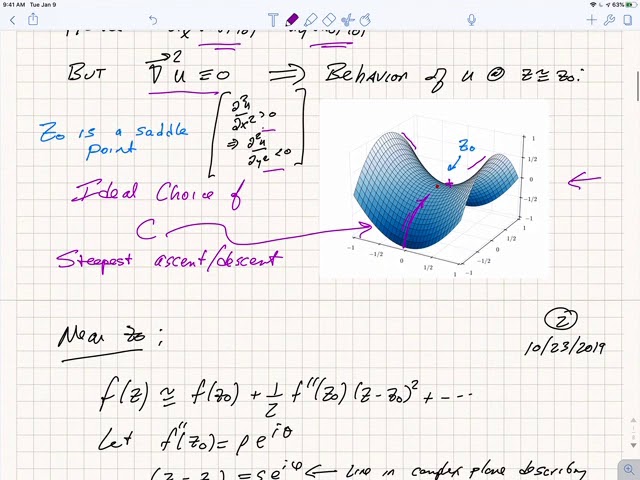

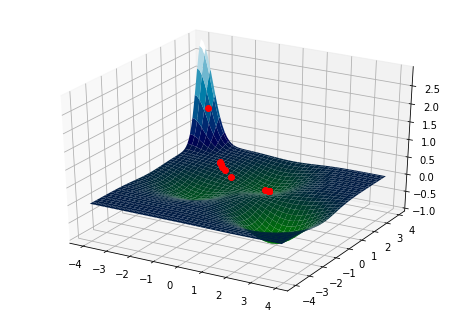

![4. Beyond Gradient Descent - Fundamentals of Deep Learning [Book]](https://www.oreilly.com/api/v2/epubs/9781491925607/files/assets/fodl_0405.png)